By your command, my robot: AI war games spark debate about ethical limits

Donald Trump has ordered the termination of all contracts with Anthropic due to the technology company’s insistence on imposing red lines on the use of its technology

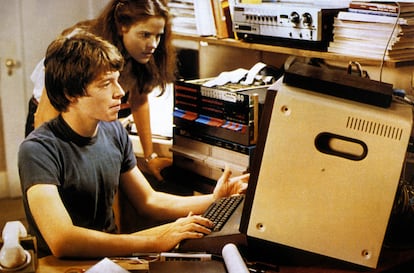

In 1983, the film WarGames became a box office hit. Starring a very young Matthew Broderick, it told the story of a teenage hacker who faces off against a supercomputer controlled by an artificial intelligence named Joshua. The machine, which managed the United States’ nuclear missile arsenal, was out of control and threatened to unleash an atomic war. Over time, the film became a cult classic for anticipating the risks of granting a machine the power of war without human oversight.

Four decades after the release of that iconic movie, the United States seems to be revisiting some of the moral dilemmas posed by director John Badham. The limits of using artificial intelligence for military purposes are fueling a global debate with real-world consequences.

U.S. President Donald Trump has ordered the cancellation of all contracts with Anthropic, a leading artificial intelligence (AI) company, in a controversial decision with political, business, and technological implications. The White House justifies its decision to exclude Anthropic by citing the refusal of its founder, Dario Amodei, to release all the functionalities of his AI tool, named Claude. Amodei has drawn two essential red lines beyond which he is unwilling to allow the use of his technology: it must not be used for the mass surveillance of citizens, nor for the operation of autonomous weapons without human control. This time, in an unprecedented move, it is the company that is setting the limits for the government.

On March 9, in response to the Trump administration’s veto, Anthropic sued the U.S. government. “These actions are unprecedented and unlawful,” Anthropic’s lawyers said. “The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech.” The lawsuit also seeks to set limits on the veto. Trump claimed that it affected all contracts with all agencies and made it impossible to contract with other companies that collaborate with the federal government. This would essentially mean the collapse of Anthropic, which has a turnover of more than $20 billion but has lucrative contracts with Google, Amazon, and other tech giants that have commercial ties to the federal government.

Anthropic argues in the lawsuit that the Trump administration has violated the company’s First Amendment rights (which guarantee freedom of speech) in speaking about the limits of the military applications of AI. “Anthropic was founded based on the belief that AI technologies should be developed and used in a way that maximizes positive outcomes for humanity, and its primary animating principle is that the most capable artificial-intelligence systems should also be the safest and the most responsible,” the company’s lawyers wrote in a complaint filed in the U.S. District Court for the Northern District of California. “Anthropic brings this suit because the federal government has retaliated against it for expressing that principle,” they added.

“Watershed moment”

“The events of the past few days have marked a watershed moment for the independence of private AI companies from the U.S. government and have made it clear that, without legislation, the use of these tools for warfare and surveillance is not a question of if, but when,” says Adam Conner, an analyst at the Center for American Progress (CAP). “It has also been yet another example of the Trump administration’s attempt to abuse its power and take likely illegal steps to try to destroy a frontier AI lab that disagreed with the government,” he adds.

The termination of the U.S. military’s contracts with Anthropic is a sensitive matter. To date, Claude is the only AI used by the Pentagon for managing classified files in the cloud. Military commanders used Claude during the operation to capture former Venezuelan president Nicolás Maduro and his wife in Caracas. They are also employing it in the U.S. attack on Iran to topple the theocratic regime.

Claude, Joshua’s alter ego from the film WarGames, is essential to the Maven intelligent system. This sophisticated program, created by Palantir and Anthropic, gathers data from numerous digital sources to analyze it and assist the military in decision-making. It is the most advanced technology ever used in military operations. It is enabling the military to obtain valuable information from a vast amount of classified data from satellites, surveillance, and other digital sources, which is used for operational planning and real-time target identification in the Iran conflict.

A delicate decision

The use of Claude in this operation is so significant that it presents the Pentagon with the challenge of abandoning this AI tool once the six-month moratorium granted by Trump expires. However, several government officials acknowledge that Claude is superior to its competitors, such as ChatGPT (OpenAI), Gemini (Google), and Grok (xAI), and admit that removing Anthropic’s tools from their operations will be a complex task.

The Anthropic case raises a dilemma that affects not only warfare but also the very development of technology: where do the limits of AI lie when ethical and moral questions have yet to be resolved and, likely, legislated? But in the AI industry, things move so fast that questions remain unanswered before a new technological advance raises new ones. Amodei asserts that AI is improving exponentially every week, at an unprecedented speed, which fuels the risks surrounding its use.

Relations between the White House and Anthropic weren’t always strained. A year ago, Amodei’s company signed a $200 million contract with the Pentagon to manage classified files in a secure cloud environment. Claude is the most advanced AI for corporate environments, boasting higher security standards than its rivals. But on January 9, Defense Secretary Pete Hegseth unveiled a new security strategy that included a special section on promoting AI in military activities. That’s when the questions began.

Suspicions turned to distrust when the Pentagon learned of a conversation between an Anthropic employee and a Palantir employee. The former was asking if Claude had been used in the operation to capture Maduro.

The Pentagon believes that defense contractors should not place limits on the use of their services. It uses the analogy of bombs: an ammunition manufacturer doesn’t ask who a particular projectile will be fired at or under what conditions it will be supplied; it’s up to the military to determine its use. And they pose the question: “What should a government do if a private company develops a nuclear weapon using AI? That’s what we’re seeing.”

Hegseth, head of the renamed Department of War (DoW), summoned Amodei to the Pentagon to convince him that the use of Claude could not be limited and that it could be employed “for any lawful purpose.” This is the legal phrase employed to assure him that it would not be used for mass surveillance or unsupervised autonomous weaponry. But the solution did not satisfy Amodei. During the meeting, Hegseth gave him an ultimatum: if he did not accept the conditions within three days, he would invoke the Defense Production Act of 1950, which was used during the Korean War, to force the company to collaborate with the government without restrictions. He also threatened to designate Anthropic as a “supply chain risk,” an extraordinary measure that would prohibit the company from doing business with other military contractors.

It’s worth remembering that Hegseth, a former Fox News host, convened top military leaders in late September to explain his vision for the U.S. Armed Forces: an army composed of “warriors,” not “defenders.”

Possible errors

The verbal escalation between the two intensified. Amodei maintains that the Trump administration’s threats are contradictory: on the one hand, they claim Claude is essential, and on the other, that it is expendable. Furthermore, he clarifies that he is not categorically opposed to fully autonomous weapons, a step that could violate international law, but rather believes that the technology is not yet mature enough to prevent errors. Regarding surveillance, he points out that although the Pentagon assures it will comply with the law, the problem is that the legislation is not adapted to the use of AI.

The government, Amodei says, can already collect information, perform mass scanning, gather biometric data, fingerprints, tax information, intercept phone calls, and geolocate individuals, among other things. AI can aggregate this individually harmless, scattered data to obtain a complete picture of anyone’s life, automatically and on a massive scale.

Amodei offers the following example: “A swarm of millions or billions of fully automated armed drones, controlled locally by a powerful AI and strategically coordinated worldwide by an even more powerful AI, could constitute an unbeatable army, capable of both defeating any army in the world and suppressing dissent within a country by tracking every citizen.” There would be a much greater risk of democratic countries turning AI armies against their own populations, Amodei points out, expressing concern that governments could purchase massive amounts of citizen data to create AI profiles based on political affiliation, discontent, opposition, and so on.

The Pentagon, for its part, is considering the possibility of a surprise attack. “What if an intercontinental ballistic missile carrying nuclear weapons were headed toward the United States with only 90 seconds’ notice, and Anthropic’s AI were the only way to trigger a missile response to save the country, but the company’s security measures prevented it?” a senior official pondered in a phone call to Anthropic in December. Accounts of the response are contradictory. The Department of War claims that Amodei responded “call me,” a possibility that made them uneasy because it would mean leaving the military response to an attack in the hands of a businessman. Anthropic maintains that the Pentagon could use its AI tools for missile defense and cyber operations without limitations in such cases.

Before the deadline set by Hegseth expired, Trump took decisive action. “THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military,” he wrote on his social media platform, Truth Social.

In this way, the Trump administration severed all contracts with Anthropic, but allowed a six-month grace period for disengagement without causing significant disruption. That same evening, OpenAI, Anthropic’s rival, announced a $200 million contract with the Pentagon to manage classified files, similar to what Amodei’s company had done.

“Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic,” Hegseth wrote on X, following through on his threat. Anthropic announced it would sue the White House for breaking the contract and labeling it a supply chain risk. The Pentagon contract isn’t huge for a company valued at over $350 billion with annual revenue approaching $20 billion, but Anthropic has a vast client portfolio, including Amazon, Boeing, Lockheed Martin, Palantir, and others, with close ties to the U.S. administration. If Trump keeps his word, it would be fatal for Claude. Amodei argues that the ban on contracting with other companies would only apply to military uses, which would lessen the financial blow. But that remains to be seen. CNBC reported this week that several defense technology companies are asking their employees to stop using Anthropic’s Claude and switch to other AI models.

The inclusion of Anthropic on the government’s blacklist is highly unusual for a U.S. company. This measure had previously been used in cases such as Huawei and ZTE, with alleged ties to China, or the cybersecurity firm Kaspersky, linked to Moscow. For this reason, and because of the potential consequences of severing ties with Anthropic, the Information Technology Industry Council (ITI), an organization of technology companies including Nvidia, AMD, Google, and Apple, sent a letter to Hegseth expressing its concern about the designation of a U.S. company as a supply chain risk.

“Even if it only directly affects Anthropic, blacklisting the company would also signal to numerous other companies what the government is willing to do to force certain actions on their products, producing a domino effect at this critical moment in technological development. The outcome could harm not only developments here in the United States, but also the global success of AI leaders like Anthropic,” explains Jennifer Huddleston, senior fellow in technology policy at the Cato Institute.

Different origins

To understand the conflict, it’s necessary to be familiar with the origins of both companies. Anthropic was founded in 2021 by seven researchers who left OpenAI on ethical grounds, alarmed by the security problems of ChatGPT. They have championed moral commitment in AI development, endowing it with a “constitution,” testing it to avoid ethical errors, and — although they have recently eased security barriers to accelerate business development — they insist that AI must be safe for society.

There’s also a political dimension. Amodei supported Joe Biden and Kamala Harris in the last presidential election. He has challenged Trump on several occasions, advocating for greater regulation of AI. He criticized Trump when the U.S. president allowed the sale of Nvidia chips to China. “It’s like selling nuclear weapons to North Korea,” he said a few weeks ago in Davos. Furthermore, he has hired several high-ranking officials from the Biden administration. Meanwhile, Sam Altman, the head of OpenAI, is a friend of Elon Musk, the founder of Tesla, and has close ties to Trump’s circle.

“Private sector governance is not enough to curb government use and potential abuse of advanced AI. Congress cannot wait to act and must begin holding hearings to investigate the administration’s actions and develop legislation to protect citizens from mass surveillance,” Conner, of the CAP, points out.

While bombs continued to fall on Tehran with Claude’s help, Amodei was locked in negotiations with the Pentagon to recover the contract broken by Trump, as reported by the Financial Times. However, there is considerable antagonism between the two sides. Emil Michael, the Defense Department’s chief technology officer, accused Amodei of being a “liar,” saying, “he wants to play God.” The businessman, meanwhile, blamed the administration’s veto on his failure to donate money to the Republican president’s cause and on “not praising Trump’s dictatorial style.”

“Terrestrial damage has been done to the industry. Even under the tightest supply chain risk designation, the U.S. government continues to say it will treat you as a foreign adversary for refusing to comply with its business terms. Simply for having different ideas, expressing them in discourse, and materializing that discourse in decisions about whether or not to deploy one’s own property. Each of these is fundamental to our republic, and each was assaulted by the War Department last week. Most corporations, political actors, and others will have to operate under the assumption that tribal logic will now prevail,” notes Dean W. Ball, a former senior policy advisor in the White House Office of Science and Technology Policy.

